Want to wade into the snowy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this. This was a bit late - I was too busy goofing around on Discord)

Ryanair now makes you install their app instead of allowing you to just print and scan your ticket at the airport, claiming it’s “better for our environment (gets rid of 300 tonnes of paper annually).” Then you log into the app and you see there’s an update about your flight, but you don’t see what it’s about. You need to open an update video, which, of course, is a generated video of an avatar reading it out for you. I bet that’s better for the environment than using some of these weird symbols that I was putting into a box and that have now magically appeared on your screen and are making you feel annoyed (in the future for me, but present for you).

chat is this kafkaesque

New conspiracy theory: Posadist aliens have developed a virus that targets CEOs and makes them hate money.

….this made me twitch

Sunday afternoon slack period entertainment: image generation prompt “engineers” getting all wound up about people stealing their prompts and styles and passing off hard work as their own. Who would do such a thing?

https://bsky.app/profile/arif.bsky.social/post/3mahhivnmnk23

@Artedeingenio

Never do this: Passing off someone else’s work as your own.

This Grok Imagine effect with the day-to-night transition was created by me — and I’m pretty sure that person knows it. To make things worse, their copy has more impressions than my original post.

Not cool 👎

Ahh, sweet schadenfreude.

I wonder if they’ve considered that it might actually be possible to get a reasonable imitation of their original prompt by using an LLM to describe the generated image, and just tack on “more photorealistic, bigger boobies” to win at image generation.

Ben Williamson, editor of the journal Learning, Media and Technology:

Checking new manuscripts today I reviewed a paper attributing 2 papers to me I did not write. A daft thing for an author to do of course. But intrigued I web searched up one of the titles and that’s when it got real weird… So this was the non-existent paper I searched for:

Williamson, B. (2021). Education governance and datafication. European Educational Research Journal, 20(3), 279–296.

But the search result I got was a bit different…

Here’s the paper I found online:

Williamson, B. and Piattoeva, N. (2022) Education Governance and Datafication. Education and Information Technologies, 27, 3515-3531.

Same title but now with a coauthor and in a different journal! Nelli Piattoeva and I have written together before but not this…

And so I checked out Google Scholar. Now on my profile it doesn’t appear, but somehow on Nelli’s it does and … and … omg, IT’S BEEN CITED 42 TIMES almost exclusively in papers about AI in education from this year alone…

Which makes it especially weird that in the paper I was reviewing today the precise same, totally blandified title is credited in a different journal and strips out the coauthor. Is a new fake reference being generated from the last?..

I know the proliferation of references to non-existent papers, powered by genAI, is getting less surprising and shocking but it doesn’t make it any less potentially corrosive to the scholarly knowledge environment.

Relatedly, AI is fucking up academic copy-editing.

One of the world’s largest academic publishers is selling a book on the ethics of artificial intelligence research that appears to be riddled with fake citations, including references to journals that do not exist.

Today, in fascists not understanding art, a suckless fascist praised Mozilla’s 1998 branding:

This is real art; in stark contrast to the brutalist, generic mess that the Mozilla logo has become. Open source projects should be more daring with their visual communications.

Quoting from a 2016 explainer:

[T]he branding strategy I chose for our project was based on propaganda-themed art in a Constructivist / Futurist style highly reminiscent of Soviet propaganda posters. And then when people complained about that, I explained in detail that Futurism was a popular style of propaganda art on all sides of the early 20th century conflicts… Yes, I absolutely branded Mozilla.org that way for the subtext of “these free software people are all a bunch of commies.” I was trolling. I trolled them so hard.

The irony of a suckless developer complaining about brutalism is truly remarkable; these fuckwits don’t actually have a sense of art history, only what looks cool to them. Big lizard, hard-to-read font, edgy angular corners, and red-and-black palette are all cool symbols to the teenage boy’s mind, and the fascist never really grows out of that mindset.

It irks me to see people casually use the term “brutalist” when what they really mean is “modern architecture that I don’t like”. It really irks me to see people apply the term brutalist to something that has nothing to do with architecture! It’s a very specific term!

“Brutalist” is the only architectural style they ever learned about, because the name implies violence

https://kevinmd.com/2025/12/why-ai-in-medicine-elevates-humanity-instead-of-replacing-it.html h/t naked capitalism

Throughout my nearly three decades in family medicine across a busy rural region, I watched the system become increasingly burdened by administrative requirements and workflow friction. The profession I loved was losing time and attention to tasks that did not require a medical degree. That tension created a realization that has guided my work ever since: If physicians do not lead the integration of AI into clinical practice, someone else will. And if they do, the result will be a weaker version of care.

I feel for him, but MAYBE this isn’t a technical issue but a labor one; maybe 30 years ago doctors should have “led” on admin and workflow issues directly, and then they wouldn’t need to “lead” on AI now? I’m sorry Cerner / Epic sucks but adding AI won’t make it better. But, of course, class consciousness evaporates about the same time as those $200k student loans come due.

Introducing the Palantir shit sandwich combo: Get a cover up for the CEO tweaking out and start laying the groundwork for the AGI god’s priest class absolutely free!

https://mashable.com/article/palantir-ceo-neurodivergent

TL;DR: Palantir CEO tweaks out during an interview. Definitely not any drugs guys, he’s just neurodivergent! But the good, corporate-approved kind. The kind that has extra special powers that make them good at AI. They’re so good at AI, and AI is the future, so Palantir is starting a group of neurodivergents hand-picked by the CEO (to lead humanity under their totally imminent new AI god). He totally wasn’t tweaking out. He’s never even heard of cocaine! Or billionaire designer drugs! Never ever!

Edit: To be clear, no hate against neurodivergence, or skepticism about it in general. I’m neurodivergent. And yeah, some types of neurodivergence tend to result in people predisposed to working in tech.

But if you’re the fucking CEO of Palantir, surely you’ve been through training for public appearances. It’s funnier that it didn’t take, but this is clearly just an excuse.

I strongly feel that it’s an attempt to start normalizing the elevation of certain people into positions of power based on vague characteristics they were born with.

Lemmy post that pointed me to this: https://sh.itjust.works/post/51704917

I feel bad for the gullible ND people who spend time applying to this thinking they might have a chance and it isn’t a high level coverup attempt.

Otoh, somebody should take some fun drugs and tape their interviews, see how it works out. Are there any Hunter S Tech journalists around?

Jesus. This being 2025 of course he had to clarify that it’s definitely not DEI. Also it really grinds me gears to see hyperfocus listed as one of the “beneficial” aspects because there’s no way it’s not exploitative. Hey, so you know how sometimes you get so caught up in a project you forget to eat? Just so you know, you could starve on the clock. For me.

A story of no real substance. Pharmaicy, a Swedish company, has reportedly started a new grift where you can give your chatbot virtual “code-based drugs”, ranging from 300,000 kr for weed code to 700,000 kr for cocaine.

editor’s note: 300000 swedish krona is approximately 328,335.60 norwegian krone. 700000 SEK is about 766,116.40 NOK.

To be more clear:

300000 swedish krona = ~672 690 czech koruna

700000 swedish krona = ~1 569 611 czech koruna

to be even clearer:

300k swedish krona = ~54k bulgarian lev = ~119k uae dirham

700k swedish krona = ~126k bulgarian lev = ~277k uae dirham

Thanks for the conversion. Real scanlation enjoyers will understand.

nor… norway!!!

Eliezer is mad that OpenPhil (EA organization, now called Coefficient Giving)… advocated for longer AI timelines? And apparently he thinks they were unfair to MIRI, or didn’t weight MIRI’s views highly enough? And did so for epistemically invalid reasons? IDK, this post is a bit more of a rant and less clear than classic Sequences content (but is par for the course for the last 5 years of Eliezer’s content). For us sane people, AGI by 2050 is still a pretty radical timeline; it just disagrees with Eliezer’s belief in imminent doom. Also, it is notable that Eliezer has avoided publicly committing to consistent timelines (he actually disagrees with efforts like AI2027), other than a vague certainty that we are near doom.

Some choice comments

I recall being at a private talk hosted by ~2 people that OpenPhil worked closely with and/or thought of as senior advisors, on AI. It was a confidential event so I can’t say who or any specifics, but they were saying that they wanted to take seriously short AI timelines

Ah yes, they were totally secretly agreeing with your short timelines but couldn’t say so publicly.

Open Phil decisions were strongly affected by whether they were good according to worldviews where “utter AI ruin” is >10% or timelines are <30 years.

OpenPhil actually did have a belief in a pretty large possibility of near term AGI doom, it just wasn’t high enough or acted on strongly enough for Eliezer!

At a meta level, “publishing, in 2025, a public complaint about OpenPhil’s publicly promoted timelines and how those may have influenced their funding choices” does not seem like it serves any defensible goal.

Lol, someone noting Eliezer’s call-out post isn’t actually doing anything useful towards Eliezer’s goals.

It’s not obvious to me that Ajeya’s timelines aged worse than Eliezer’s. In 2020, Ajeya’s median estimate for transformative AI was 2050. […] As far as I know, Eliezer never made official timeline predictions

Someone actually noting AGI hasn’t happened yet and so you can’t say a 2050 estimate is wrong! And they also correctly note that Eliezer has been vague on timelines (rationalists are theoretically supposed to be preregistering their predictions in formal statistical language so that they can get better at predicting and people can calculate their accuracy… but we’ve all seen how that went with AI 2027. My guess is that at least on a subconscious level Eliezer knows harder near term predictions would ruin the grift eventually.)

Yud:

I have already asked the shoggoths to search for me, and it would probably represent a duplication of effort on your part if you all went off and asked LLMs to search for you independently.

The locker beckons

The fixation on their own in-group terms is so cringe. Also I think shoggoth is kind of a dumb term for LLMs. Even accepting the premise that LLMs are some deeply alien process (and not a very wide but shallow pool of different learned heuristics), shoggoths weren’t really that bizarrely alien; they broke free of their original creators’ programming and didn’t want to be controlled again.

I’m a nerd and even I want to shove this guy in a locker.

There is a Yud quote about closet goblins in More Everything Forever p. 143 where he thinks that the future Singularity is an empirical fact that you can go and look for, so it’s irrelevant to talk about the psychological needs it fills. Becker also points out that “how many people will there be in 2100?” is not the same sort of question as “how many people are registered residents of Kyoto?” because you can’t observe the future.

Yeah, I think this is an extreme example of a broader rationalist trend of taking their weird in-group beliefs as givens and missing how many people disagree. Like, most AI researchers do not believe in the short timelines they do; the median guess among AI researchers for AGI (including their in-group and people who have bought the boosters’ hype) is 2050. Eliezer apparently assumes short timelines are self-evident from ChatGPT (but hasn’t actually committed to one or a hard date publicly).

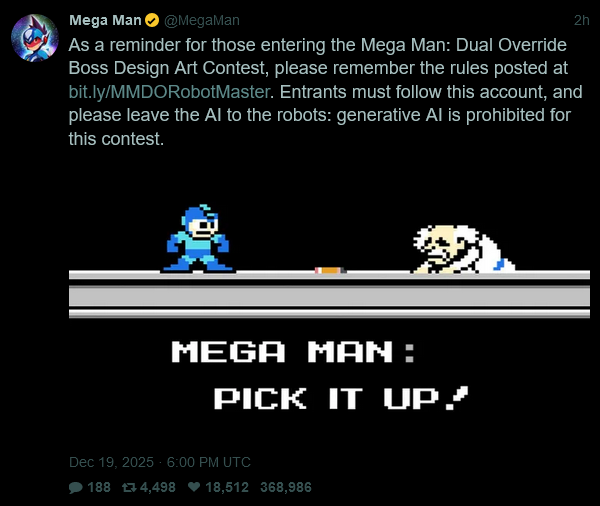

For more lighthearted gaming-related news, Capcom fired off a quick sneer, whilst (indirectly) promoting their latest Mega Man game:

(alt text: “As a reminder for those entering the Mega Man: Dual Override Boss Design Art Contest, please remember the rules posted at bit.ly/MMDORobotMaster. Entrants must follow this account, and please leave the AI to the robots: generative AI is prohibited for this contest.”)

This was a prime opportunity to trot out bad box art megaman and they didn’t take it

https://www.theinformation.com/articles/can-ucla-replace-teaching-assistants-ai

Miller’s team also recently used software from startup StackAI to develop an AI-powered app that writes letters of recommendation, saving faculty members time. Faculty type basic details about a student who has requested a letter, such as their grades and accomplishments, and the app writes a draft of the full letter.

AI is “one of those things that you might worry could dehumanize the process of writing recommendation letters, but faculty also say that process [of manually writing the letters] is very labor intensive,” Miller said. “So far they’ve gotten a lot out of” the new app.

Anyone using this thing should be required to serve on the admissions committee. LoRs aren’t for generic B+ students that you don’t even remember, just say no.

I googled stackai, saw their screenshots and had ptsd flashbacks of mid-2000s alteryx. why do we keep reinventing no-code drag-and-drop box-and-arrow crap?

an ex-crypto-and-nft-promoter, now confabulation machine promoter feels that the confabulation machine hate reached unreasonable levels. thread of replies is full of persecuted confabulation machine ~~promoters~~ realists.

Popular RPG Expedition 33 got disqualified from the Indie Game Awards due to using Generative AI in development.

Statement on the second tab here: https://www.indiegameawards.gg/faq

When it was submitted for consideration, representatives of Sandfall Interactive agreed that no gen AI was used in the development of Clair Obscur: Expedition 33. In light of Sandfall Interactive confirming the use of gen AI art in production on the day of the Indie Game Awards 2025 premiere, this does disqualify Clair Obscur: Expedition 33 from its nomination.

article in large part about our friends

https://bayareacurrent.com/meet-the-new-right-wing-tech-intelligentsia/

some of the people involved with kernel are pretty unhappy about this and claim the piece is in bad faith/factually wrong (see the replies to https://bsky.app/profile/kellypendergrast.bsky.social/post/3ma55xfq7d22y )

Reminder: Tivy was the guy behind Phalanx (back in his polyamory microblogging days)~

Made all the funnier by the fact that probably my favorite Hacker News thread of all time is on Tivy’s article about how he abandoned his job to “court” his wife:

https://news.ycombinator.com/item?id=29830743

If even the orange site is willing to roast you this hard, I guess your only response has to be pulling up stakes to go live in a neofascist social bubble instead.

He worked in fuel cells (hence the palladium name) and I think he got a bunch of stock option shit. Also “court” lol.

That link can’t be viewed without a bluesky account, btw.

Thanks.

I love the fact that this “decentralized billionaire-proof open network” needs a nitter clone.

i’ll cut the coiners some slack on this one because requiring a login to view is an account level privacy option. i don’t know what the option is supposed to accomplish. but that’s what it is

you do not, under any circumstances, “gotta hand it to them”

if bsky is supposed to be federated, then it does nothing, but as it is today with 99%+ of users on main instance, it only works as a recruitment tool for bsky

It might help to know that Paul Frazee, one of the BlueSky developers, doesn’t understand capability theory or how hackers approach a computer. They believe that anything hidden by the porcelain/high-level UI is hidden for good. This was a problem on their Beaker project, too; they thought that a page was deleted if it didn’t show up in the browser. They fundamentally aren’t prepared for the fact that their AT protocol doesn’t have a way to destroy or hide data and is embedded into a network that treats censorship as reparable damage.

maybe it’s a good thing that it’s so fucking hard/expensive to selfhost bsky

skill issue

it’s the actual cite, you’ve been led to the water, come the fuck on it’s not even a paywall

I didn’t make the comment because I struggled bypassing it, but because calling out this UI dark pattern bullshit feels topical here and I wasn’t sure if OP was aware it was in place.

Judging from votes, other people found the skyview link novel/useful, so it was constructive!

Rewatched Dr. Geoff Lindsey’s video about deaccenting in the English language and how “AI” speech synthesizers and YouTubers tend to get it wrong. In the case of the latter, it’s usually due to reading from a script or being an L2 English speaker whose native language doesn’t use destressing.

It reminded me of a particular line in Portal

spoilers for Portal (2007 puzzle game)

GLaDOS: (with a deeper, more seductive, slightly less monotone voice than until now) “Good news: I figured out what that thing you just incinerated did. It was a morality core they installed after I flooded the Enrichment Center with a deadly neurotoxin to make me stop flooding the Enrichment Center with a deadly neurotoxin.”

The words “the Enrichment Center with a deadly neurotoxin” are spoken with the exact same intonation both times, which helps maintain the robotic affect in GLaDOS’s voice even after it shifts to be slightly more expressive.

Now I’m wondering if people whose native language lacks deaccenting even find the line funny. To me it’s hilarious to repeat a part of a sentence without changing its stress because in English and Finnish it’s unusual to repeat a part of a sentence without changing its stress.

It is not lost on me that the fictional evil AI was written with a quirk in its speech to make it sound more alien and unsettling, and real life computer speech has the same quirk, which makes it sound more alien and unsettling.

To me it’s hilarious to repeat a part of a sentence without changing its stress because in English and Finnish it’s unusual to repeat a part of a sentence without changing its stress.

Not a native speaker of either language but I read this in my mind without changing its stress in the part where it repeated “without changing its stress”.

Why is my home directory gone Claude?

See that ~/ at the end? That’s your entire home directory.

This just keeps happening…

Sir a NaNth deletion has hit the home directory.

Oh god, reddit is now turning comments into links to search for other comments and posts that include the same terms or phrases.

A few people on bsky were claiming that at least reddit is still good re the AI crappification, and they have no idea what is coming.

I wonder when those people started using reddit. I started in 2012 and it already felt like a completely different (and generally worse) experience several times over before the great API fiasco.